Neonatal Opioid Withdrawal Syndrome (NOWS) is a major public health problem worldwide. Large numbers of infants born after fetal exposure to opioids experience unpleasant symptoms, requiring non-pharmacologic and pharmacologic intervention.

Studies of different approaches for symptom control have the potential to improve outcomes for this fragile population.

To go back in history a bit, the Finnegan scale was developed as a research tool. It is fairly cumbersome, with 21 items, several of which are rather subjective, and some of which are frequent in babies without opioid exposure. The threshold for treatment, a score of 8, was entirely arbitrary, and has never been adequately tested or compared to other thresholds. The scale was developed in 1975, when the standards now required for development of such tools were not in place.

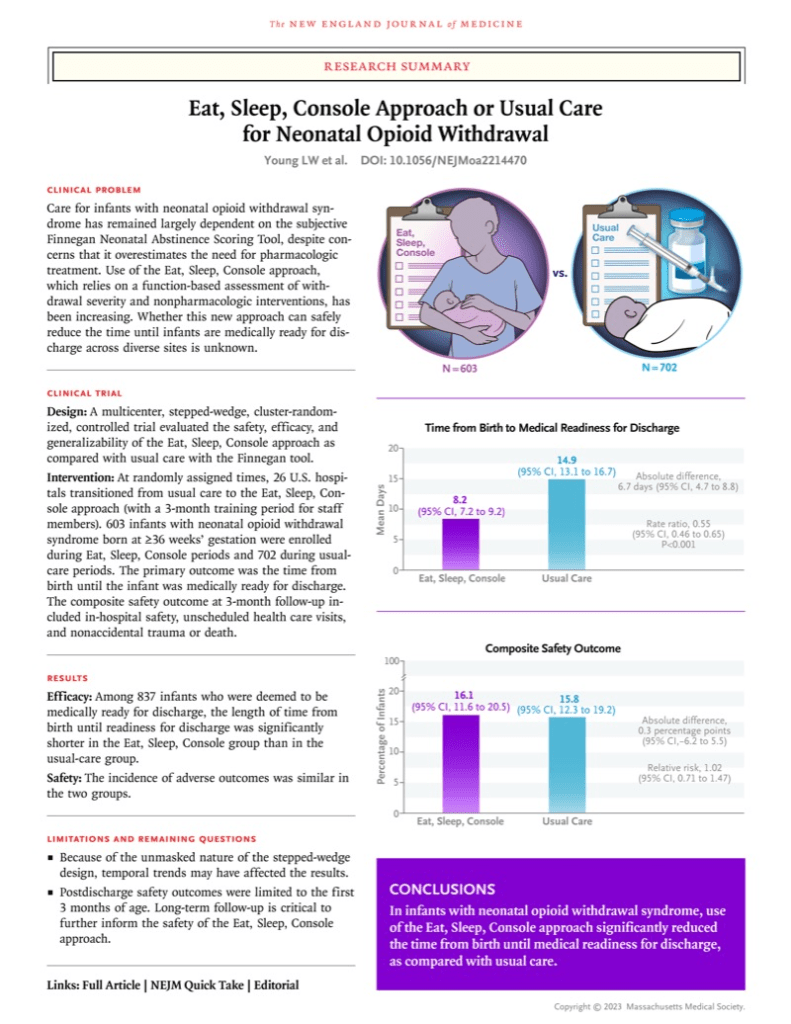

A big advance in the management of NOWS was the development of a different system, Eat, Sleep, Console (ESC). Rather than being a “score”, the ESC system is a method of evaluating an infant’s ability to function normally. Babies are assessed on their ability to breastfeed successfully, or eat at least 30 mL per feed, to sleep uninterrupted for at least 1 h, and to be consoled within 10 min. If any of these conditions are not met, non-pharmacological soothing methods are instituted, and eventually medications may be introduced. Rather than regular timed Finnegan scoring, the ESC system is a continuous longitudinal evaluation, allowing intervention as soon as an infant is inconsolable, feeds poorly, or has interrupted sleep.
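
To make the three criteria concrete, here is a minimal sketch of the ESC check as described above. The class and field names are my own invention for illustration, and the handling of values exactly at a threshold is an assumption; this is not taken from any official ESC tool.

```python
from dataclasses import dataclass


@dataclass
class EscAssessment:
    """Hypothetical record of one ESC evaluation (field names are illustrative)."""
    breastfeeding_well: bool      # judged to be breastfeeding successfully
    volume_per_feed_ml: float     # volume taken per feed, if bottle fed
    longest_sleep_h: float        # longest uninterrupted sleep, in hours
    minutes_to_console: float     # time needed to console the infant


def unmet_esc_criteria(a: EscAssessment) -> list:
    """Return which of eat / sleep / console are not met."""
    unmet = []
    # Eat: breastfeeds successfully OR takes at least 30 mL per feed
    if not (a.breastfeeding_well or a.volume_per_feed_ml >= 30):
        unmet.append("eat")
    # Sleep: at least 1 h uninterrupted
    if a.longest_sleep_h < 1:
        unmet.append("sleep")
    # Console: consoled within 10 min (assumed to include exactly 10 min)
    if a.minutes_to_console > 10:
        unmet.append("console")
    return unmet


struggling = EscAssessment(False, 20, 0.5, 15)
print(unmet_esc_criteria(struggling))
```

An empty list means the infant is eating, sleeping and consolable; any entry is a trigger for non-pharmacological soothing first, with medication only if that fails.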

Large trials have shown that infants evaluated and treated according to ESC have shorter hospitalisations without an increase in adverse effects. Young LW, et al. Eat, Sleep, Console Approach or Usual Care for Neonatal Opioid Withdrawal. N Engl J Med. 2023.

Infants managed with ESC have much lower opioid exposure in the neonatal period, and nevertheless have no increase in adverse outcomes in the short or medium term.

I can’t see any justification for continuing to use the Finnegan score for these infants, which made me a little surprised to see some of the data in this new publication. Devlin LA, et al. Symptom-Based Dosing for Neonatal Opioid Withdrawal: The OPTimize NOW Randomized Clinical Trial. JAMA. 2026.

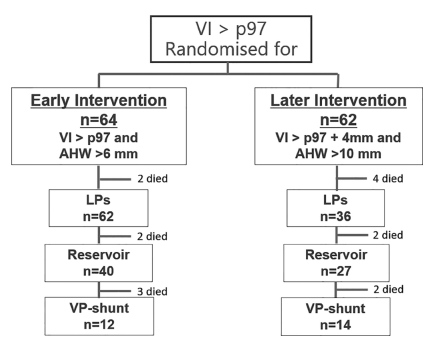

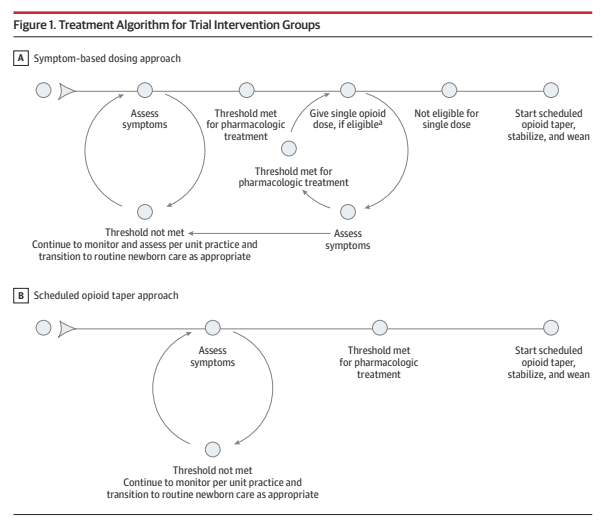

One of the questions about the ESC approach was exactly when drug therapy is indicated, and how to manage it. This RCT compared 2 different approaches, shown diagrammatically below. This was a cluster randomized trial, with individual centres being the clusters. Centres were randomized either to start with the symptom-based dosing approach to pharmacologic therapy (approach A) and then switch after 5 months to the scheduled opioid taper approach (approach B) for a second 5-month period, or to commence with approach B and then switch to approach A.

As you can see, the initial evaluation and intervention is the same in both groups. In the standard tapering, “B”, group, once the infant had symptoms which breached the threshold for pharmacologic treatment, they were immediately started on regular drug dosing, which was then adjusted and weaned progressively. In the A group, the symptom-based dosing group, an infant who was over the treatment threshold would receive a single dose of whichever opioid was the hospital’s usual choice for such an infant (morphine, buprenorphine or methadone) and then, if their symptoms improved, they would go back to monitoring without routine repeated doses. If they needed more than 2 consecutive doses at either 2-, 3- or 4-hour intervals, they were started on regular dosing and then weaned after they were stabilised.

At least I think that was the protocol, because the figure has a note which states “Infants were eligible if they received less than 3 doses in the preceding 24-hour period and fewer than 2 consecutive short-interval doses”. But fewer than 2 means 1, and I don’t know how you can have 1 consecutive dose. Similarly, less than 3 doses means either 1 or 2 doses, which is actually not what is in the protocol.

The protocol, attached as a supplement, states that the infants were allowed up to 3 doses (not less than 3) in a 24-hour period, and if they needed a 4th dose, then they switched to regular dosing and weaning. The precise wording in the protocol is “if a 4th dose is required within any 24 hour period, the study site will move to scheduled opioid treatment per the algorithm used at the study site…”.

I think the note by the figure should say “less than or equal to 3 doses in the preceding 24-hour period, and less than or equal to 2 consecutive short-interval doses”. That would then be consistent with the text of the article, which states “Each time the threshold for treatment was met, an as-needed dose was given as long as the infant had not already received 3 opioid doses in the preceding 24-hour period or 2 consecutive short-interval doses”.

In other words, I think what that means is that if an infant was not eating, or sleeping, or consolable, they would receive a dose of morphine (or an alternative). If 2 hours later they were still in that state, they would receive another morphine dose; but if they needed another one 2 hours later they were started on regular morphine, with a progressive wean once the symptoms were under control.
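
Here is my reading of that rule written out as a small sketch. This is an interpretation, not the trial's algorithm. The function name, the use of age in hours for dose times, and the 4-hour default for what counts as a "short interval" are all my assumptions (sites could use 2, 3 or 4 hours).

```python
def next_step(prior_dose_times_h, now_h, short_interval_h=4.0):
    """Decide what happens when an infant breaches the treatment threshold.

    prior_dose_times_h: ages (in hours) at which as-needed doses were given
    now_h: current age in hours
    short_interval_h: assumed site-specific short interval (2, 3 or 4 h)
    Returns "as_needed" or "scheduled".
    """
    # Only doses in the preceding 24-hour period count
    recent = sorted(t for t in prior_dose_times_h if now_h - 24 <= t <= now_h)

    # Already had 3 doses in 24 h: a 4th dose means scheduled treatment
    if len(recent) >= 3:
        return "scheduled"

    # Already had 2 consecutive short-interval doses and needs another
    # one a short interval later: scheduled treatment
    if len(recent) == 2:
        previous, last = recent
        if last - previous <= short_interval_h and now_h - last <= short_interval_h:
            return "scheduled"

    return "as_needed"


# Dose at 30 h, another at 32 h, still over threshold at 34 h
print(next_step([30, 32], 34))
```

With this reading, the example in the paragraph above (a dose, another 2 hours later, and a third need 2 hours after that) ends in scheduled dosing, whereas two doses given many hours apart do not.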

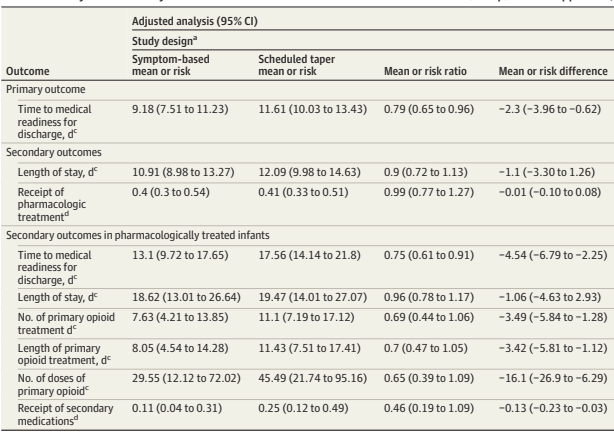

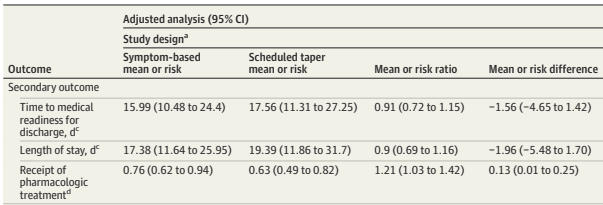

The primary outcome for the trial was the time until medical readiness for discharge, which meant more than 96 hours of age and more than 48 hours since the last opioid dose, or when the medical provider discharged them, whichever was first. This primary outcome was only used for babies cared for with the ESC approach, which was used in 15 hospitals; another 8 hospitals used the Finnegan score.
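
As a rough illustration of how that definition turns into a number, here is a minimal sketch. The function and parameter names are hypothetical. I am assuming that an infant who never received an opioid only has to satisfy the age criterion, and I treat each "more than" threshold as the moment it is reached.

```python
def readiness_age_h(last_opioid_dose_age_h=None, actual_discharge_age_h=None):
    """Age in hours at which the primary outcome is reached.

    last_opioid_dose_age_h: age at the last opioid dose, or None if never dosed
    actual_discharge_age_h: age at actual discharge, or None if not known
    """
    # Age criterion: 96 hours of age
    ready = 96.0
    # Opioid criterion: 48 hours since the last dose, if any dose was given
    if last_opioid_dose_age_h is not None:
        ready = max(ready, last_opioid_dose_age_h + 48.0)
    # Whichever comes first: meeting the criteria or actually being discharged
    if actual_discharge_age_h is not None:
        ready = min(ready, actual_discharge_age_h)
    return ready


# Last opioid dose at 100 h of age, no earlier discharge by the provider
print(readiness_age_h(100))
```

The point of the sketch is that every extra day of opioid dosing pushes the outcome back by a day, which is why fewer doses translate so directly into shorter stays.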

This is my first question: “why on earth would any centre still be using the Finnegan for term babies with NOWS?” Especially a centre with enough babies, and enough academic interest, to get involved with this trial? None of the reviews I have read, or the studies that have been performed, show any advantage to the Finnegan-based system. Infants treated using the Finnegan system receive more opioids, and stay in hospital much longer, with no decrease in adverse outcomes.

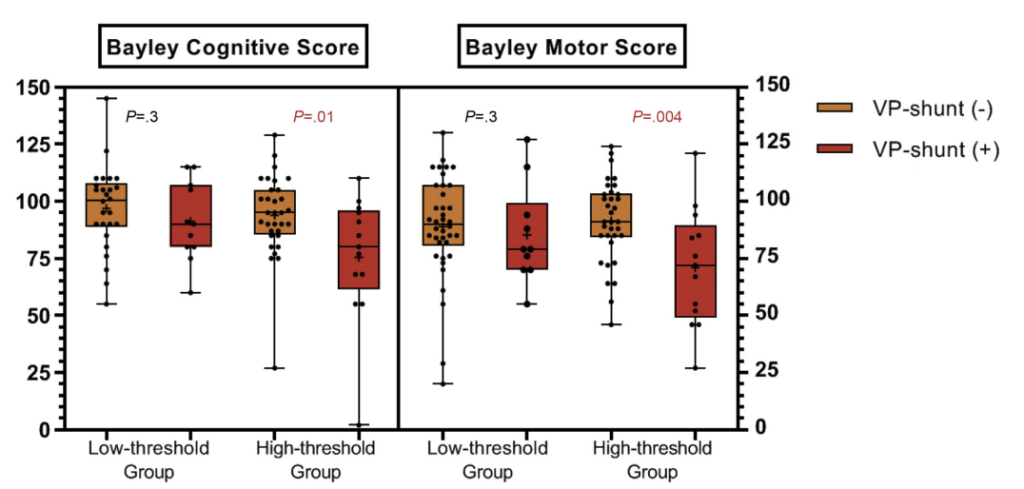

In the ESC centres there were a total of around 190 infants treated by each approach. The length of hospitalisation was 2.5 days shorter in the symptom-based group. The same proportion of infants received at least one dose of medication (40% in each group), but, among that subgroup, the number of doses was much lower, the duration of opioid treatment was 3 days shorter, and many fewer received secondary medications (which could be either phenobarbital or clonidine).

The results in the Finnegan hospitals confirm the much longer hospitalisation with the outdated approach. The symptom-based approach led to 1.5 days shorter hospitalisation, but length of stay was more variable, and the difference may have just been a random effect.

Just getting these babies home (or at least discharged from hospital) earlier isn’t, of course, the only important outcome. Safety outcomes were similar between groups; the rare primary critical safety outcomes occurred only in the scheduled taper group (n=3), a difference that was not significant. Hospital re-admissions and ER visits were also similar, but numerically less frequent in the symptom-based group.

This seems to me to be another major step forward in treatment of this condition. Infants with NOWS should clearly be managed with the ESC approach, and if they cannot E or S or C, then intermittent opioid dosing can be used without immediately starting a regular regime.

I don’t understand why phenobarbital is still being used as a secondary medication. It has numerous adverse effects, and even the sedation is short-lived, with longer treatment leading to hyperactivity. Clonidine makes much more sense. I don’t know if we even need an RCT of phenobarbital, as its use doesn’t seem to have a rational basis. But trials of the appropriate threshold for introduction of clonidine could be valuable.